Table of Contents

TL;DR

Why Agent Type Is the First Decision That Matters

What Are Agentic AI Agents?

Standard Classification of AI Agents

Advanced Agentic AI Types for Enterprise Environments

Key Differences Between Agent Types

How to Choose the Right Agent Type for Your Business

Real Business Use Cases by Agent Type

Common Mistakes in Agentic AI Deployment

How S3Corp Approaches Agentic AI Development

Conclusion: The Type Determines the Outcome

FAQ on Types of Agentic AI

Types of Agentic AI: Definitions, Examples, and Business Use Cases

A practical guide to the classification of agents in AI, covering definitions, examples, and a decision framework to help business leaders match the right agent type to the right problem.

24 Apr 2026

TL;DR

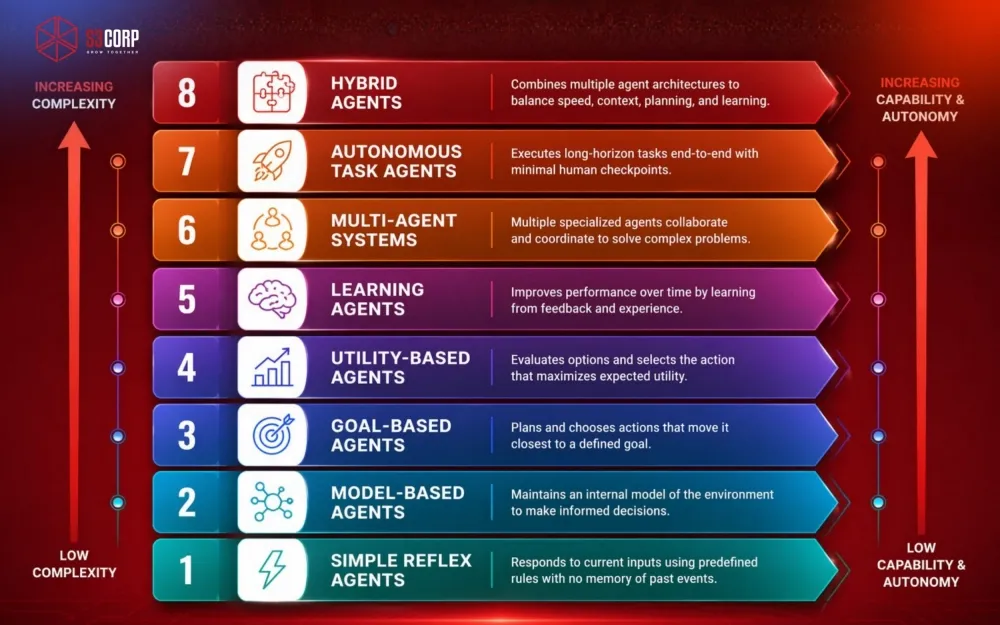

Agentic AI systems are classified into five foundational types — Simple Reflex, Model-Based, Goal-Based, Utility-Based, and Learning — each suited to a different level of decision complexity. Modern enterprise deployments extend these into three advanced architectures: Multi-Agent Systems (parallel coordination across specialized agents), Autonomous Task Agents (end-to-end execution of long-horizon goals), and Hybrid Agents (layered combinations of multiple types in a single system).

There is no universally best type. Choosing correctly depends on three factors:

- Problem structure — rule-governed tasks suit reflex agents; optimization problems suit utility-based; improvement over time suits learning agents

- Data readiness — learning and utility-based agents require clean, sufficient data; reflex and model-based agents are more forgiving of immature data environments

- Risk and cost tolerance — more autonomous architectures deliver higher capability but introduce higher latency, operating cost, and security exposure (including agentic loop risks and prompt injection vulnerabilities)

The matching exercise — problem type to agent type, done before a line of code is written — is what separates AI implementations that scale from ones that stall.

Bottom line: match the architecture to the problem, the data, and the risk profile, in that order. Start with simpler types, prove value, then scale toward autonomy.

Why Agent Type Is the First Decision That Matters

Agentic AI refers to artificial intelligence systems that can perceive their environment, make decisions, and take actions autonomously to achieve defined goals — without requiring a human to prompt every step. If you have been watching the rapid rise of AI across enterprise operations, you have probably noticed the term being used interchangeably with "autonomous agents" or "intelligent agents." Same family, different levels of capability.

This guide cuts through the noise. You will learn the standard classification of AI agent types, how each one works, and — critically — which type fits which business problem. Whether you are evaluating your first AI deployment or rearchitecting a full automation stack, understanding these distinctions is the starting point for any strategic approach.

For the broader strategic picture, start with our Agentic AI guide on what agentic AI means for the enterprise.

What Are Agentic AI Agents?

Before breaking down types, it helps to be precise about what an "agent" actually is in the AI context.

An intelligent agent is a software entity that perceives inputs from its environment, processes those inputs according to some internal logic, and produces outputs — decisions or actions — that affect that same environment. The key word is autonomy. Unlike traditional automation, which follows a fixed script, an agent makes judgment calls.

Three characteristics define every agentic AI system:

- Autonomy — The agent acts without continuous human instruction.

- Perception — It receives data from its environment (APIs, databases, sensors, user inputs).

- Decision-making — It evaluates options and selects actions based on goals, rules, or learned patterns.

In traditional automation, a rule fires and an output is produced. Agentic AI, by contrast, can handle ambiguity, adapt to new inputs, and, depending on the agent type, improve over time. A rule-based chatbot routes tickets; a learning agent builds a progressively better picture of customer intent and adjusts its behavior accordingly.

Traditional Automation vs Agentic AI

| Feature | Traditional Automation | Agentic AI |
| --- | --- | --- |
| Logic | Fixed rules | Dynamic decisions |
| Adaptability | Low | High |
| Learning | None | Continuous |

That distinction matters enormously when you are scoping a project or evaluating vendors.

Standard Classification of AI Agents

The academic and engineering literature converges on five foundational types. These are not competing products — they are conceptual blueprints that real-world systems are built from.

Simple Reflex Agents

A simple reflex agent maps a perceived input directly to a predefined action. If condition X, do action Y. No memory of what happened before.

- How it works: The agent reads the current state of the environment and checks it against a pre-built rule table. When a condition matches, it fires the corresponding response.

- Example: A rule-based customer support chatbot that detects the word "refund" and routes the conversation to the billing queue. It does not know what the customer said two messages ago, nor does it care—it fires a rule.

- Limitation: These agents break instantly when reality diverges from the rulebook. They cannot handle edge cases, evolving language, or any scenario their designers did not anticipate. For narrow, stable workflows with low variability, they work well. Anywhere else, they become a liability.
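The condition-to-action mapping described above can be sketched in a few lines. This is a minimal illustration, not a production router: the `RULES` table, the queue names, and the `reflex_route` function are all hypothetical and chosen only to mirror the "refund" example.

```python
from typing import Callable

# Hypothetical rule table: each entry pairs a condition on the current
# message with the queue to route to. Order matters; the first match wins.
RULES: list[tuple[Callable[[str], bool], str]] = [
    (lambda msg: "refund" in msg.lower(), "billing"),
    (lambda msg: "password" in msg.lower(), "account_security"),
]

DEFAULT_QUEUE = "general"  # fallback when no rule matches


def reflex_route(message: str) -> str:
    """Map the current message to a queue. No memory of earlier messages."""
    for condition, queue in RULES:
        if condition(message):
            return queue
    return DEFAULT_QUEUE
```

Anything the rule table does not anticipate falls through to the default queue, which is exactly the brittleness the limitation above describes.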

Model-Based Agents

A model-based agent maintains an internal representation of the world — essentially a working memory of how the environment has evolved over time. This allows it to handle partially observable environments where the current input alone is insufficient to determine the right action.

- How it works: The agent builds and updates a model of its environment using a combination of the current input and prior states. Decisions are made against that evolving model, not just the immediate snapshot.

- Example: An IT system monitoring tool that tracks server health over time. It does not just respond to a single CPU spike; it cross-references current load against historical baselines, recent deployment logs, and network anomalies to decide whether to trigger an alert or absorb the noise.

- Limitation: The internal model is only as accurate as the data feeding it. If the environment changes faster than the agent can update its representation — say, during a sudden infrastructure failure or a spike in anomalous traffic — the model lags reality and decisions degrade. Keeping that internal state synchronized with a live, high-velocity environment adds engineering overhead that teams often underestimate at the design stage.

Model-based agents are the minimum viable option for any environment where context shifts between interactions. For enterprises running complex infrastructure, this layer of memory is non-negotiable.
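As a rough sketch of the idea, the agent below keeps a rolling window of past CPU readings as its internal model and judges each new reading against that baseline instead of a fixed threshold. The class name, window size, and three-sigma rule are illustrative assumptions; a real monitoring tool would also fold in deployment logs and network signals.

```python
from collections import deque
from statistics import mean, pstdev


class CpuMonitorAgent:
    """Keeps a rolling window of past readings as its internal model."""

    def __init__(self, window=20, sigma=3.0, min_history=5):
        self.history = deque(maxlen=window)  # internal state of the environment
        self.sigma = sigma                   # how far above baseline counts as anomalous
        self.min_history = min_history       # readings needed before alerting

    def observe(self, cpu_percent):
        """Return 'alert' or 'ok' by comparing the reading to the baseline."""
        decision = "ok"
        if len(self.history) >= self.min_history:
            baseline = mean(self.history)
            spread = pstdev(self.history) or 1.0  # avoid a zero-width band
            if cpu_percent > baseline + self.sigma * spread:
                decision = "alert"
        self.history.append(cpu_percent)  # update the model after deciding
        return decision
```

The same reading can produce different decisions depending on what the agent has seen before, which is the behavior that separates it from a simple reflex rule.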

Goal-Based Agents

Goal-based agents introduce explicit objectives. Instead of matching conditions to responses, they evaluate multiple possible actions and choose whichever one moves them closest to a defined goal.

- How it works: The agent holds a goal state. At each decision point, it uses search or planning algorithms to project the consequences of available actions and selects the most goal-aligned path.

- Example: Workflow automation engines that sequence document approvals, data validations, and notifications across a multi-department process. The goal is "complete the approval chain." The agent figures out the optimal path to get there, even if some steps are delayed or need rerouting.

- Limitation: Goal-based agents depend on the goal being precisely and completely defined upfront. Vague objectives produce poor planning. Shifting objectives mid-execution — common in real business environments — require the agent to replan from scratch, which can introduce latency or conflicting actions. They also struggle when the action space is very large, as exhaustively evaluating every path becomes computationally expensive.

Goal-based agents are where agentic AI starts to feel genuinely autonomous. They can handle multi-step processes that would otherwise require a project manager to babysit. This architecture is central to many modern Web Application Development projects where business logic is complex and conditional.
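To make the planning step concrete, here is a small sketch that uses breadth-first search to find the shortest sequence of actions that completes an approval chain. The `ACTIONS` table and step names are hypothetical; real workflow engines use richer planners, but the principle of searching from the current state toward a goal state is the same.

```python
from collections import deque

# Hypothetical actions: name -> (steps that must already be done, step it completes)
ACTIONS = {
    "validate_data": (set(), "validated"),
    "finance_approval": ({"validated"}, "finance_ok"),
    "legal_approval": ({"validated"}, "legal_ok"),
    "send_notification": ({"finance_ok", "legal_ok"}, "notified"),
}


def plan(done, goal):
    """Breadth-first search for the shortest action sequence reaching the goal.

    done: set of steps already completed; goal: set of steps that must be completed.
    Returns a list of action names, or None if the goal is unreachable.
    """
    start = frozenset(done)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if goal <= state:  # every goal step is complete
            return path
        for name, (needs, produces) in ACTIONS.items():
            if needs <= state and produces not in state:
                nxt = state | {produces}
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, path + [name]))
    return None
```

Calling `plan` again from a different starting state (for example, after finance has already signed off) yields a shorter plan, which is a simple form of the rerouting described in the example. Because the search visits every reachable state, it also shows why large action spaces become expensive.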

Utility-Based Agents

Goal-based agents ask "will this action reach the goal?" Utility-based agents ask a harder question: "which of all goal-reaching actions produces the best outcome?" Rather than simply reaching a goal, they find the best way to reach it by scoring outcomes against a utility function that quantifies tradeoffs.

- How it works: Each possible outcome is assigned a utility score. The agent selects the action that maximizes expected utility, factoring in probabilities where the outcome is uncertain.

- Example: Dynamic pricing systems used in e-commerce and travel. The agent evaluates hundreds of variables (inventory levels, competitor pricing, demand signals, margin targets) and sets a price that optimizes revenue. It is not just finding a valid price; it is finding the best one.

- Limitation: Designing a utility function that accurately reflects real business priorities is harder than it looks. If the function is even slightly miscalibrated — overweighting short-term revenue against customer lifetime value, for instance — the agent will optimize aggressively for the wrong outcome. It will do exactly what you told it to do, not what you meant. That gap between specification and intent is the most common source of failure in utility-based deployments.

Utility-based agents are powerful, but they require clearly defined optimization criteria. Ambiguous utility functions produce confusing and sometimes damaging outputs—a lesson many early AI pricing experiments learned the hard way.
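A minimal sketch of expected-utility maximization, assuming a toy pricing problem: every number in `SCENARIOS`, the `UNIT_COST`, and the choice of plain profit as the utility function are made up for illustration.

```python
# Hypothetical demand scenarios for each candidate price:
# price -> list of (probability, units_sold)
SCENARIOS = {
    20.0: [(0.5, 100), (0.5, 80)],
    25.0: [(0.6, 70), (0.4, 50)],
    30.0: [(0.3, 60), (0.7, 30)],
}
UNIT_COST = 12.0  # assumed cost per unit


def utility(price, units):
    """Utility here is plain profit; a real function would weigh more tradeoffs."""
    return (price - UNIT_COST) * units


def expected_utility(price):
    """Probability-weighted utility across the demand scenarios for one price."""
    return sum(p * utility(price, units) for p, units in SCENARIOS[price])


def best_price():
    """Select the candidate price with the highest expected utility."""
    return max(SCENARIOS, key=expected_utility)
```

With these figures the middle price wins even though the highest price has the best margin and the lowest price sells the most units. Swapping in a different `utility` function changes the answer, which is why a miscalibrated function leads the agent to optimize for the wrong outcome.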

For businesses in E-Commerce and Retail or fintech, utility-based logic often sits at the heart of the most commercially impactful AI deployments.

Learning Agents

Learning agents are the most architecturally sophisticated of the five standard types. They improve their own performance through feedback.

- How it works: A learning agent consists of four components — a performance element (makes decisions), a critic (evaluates those decisions against a standard), a learning element (updates the model based on critic feedback), and a problem generator (proposes new experiences to explore).

- Example: Recommendation engines on streaming platforms, e-commerce sites, or content platforms. The more a user interacts, the more accurately the agent predicts what they want — because it continuously refines its model based on engagement signals.

- Limitation: Learning agents are entirely dependent on data quality and volume. A model trained on biased, incomplete, or unrepresentative data will learn — and reliably reproduce — those flaws at scale. They also require significant time and infrastructure investment before they produce reliable outputs, making them a poor fit for organizations that need quick results or lack the data pipeline maturity to support continuous feedback loops.

Learning agents are the backbone of most modern AI use cases in consumer tech—from Netflix recommendations to Google's search ranking, from fraud detection in FinTech Solutions to personalized education paths in EdTech platforms. The feedback loop is their engine; data quality is their fuel.
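The four components can be mapped onto a very small recommender, sketched here as an epsilon-greedy bandit. This is one simple way to realize the pattern, not how any named platform works; the class, method names, and the "click equals reward 1.0" standard are assumptions made for illustration.

```python
import random


class LearningRecommender:
    """Toy learning agent: each method plays one of the four component roles."""

    def __init__(self, items, explore_rate=0.1, seed=0):
        self.items = list(items)
        self.explore_rate = explore_rate
        self.rng = random.Random(seed)
        self.shows = {item: 0 for item in self.items}
        self.value = {item: 0.0 for item in self.items}  # estimated engagement

    def problem_generator(self):
        """Propose an exploratory item so new options get tried."""
        return self.rng.choice(self.items)

    def performance_element(self):
        """Pick the item with the highest estimated engagement."""
        return max(self.items, key=lambda item: self.value[item])

    def choose(self):
        """Explore occasionally, otherwise exploit the current estimates."""
        if self.rng.random() < self.explore_rate:
            return self.problem_generator()
        return self.performance_element()

    @staticmethod
    def critic(clicked):
        """Score the outcome against a standard: a click counts as 1.0."""
        return 1.0 if clicked else 0.0

    def learning_element(self, item, reward):
        """Update the running average engagement for the item shown."""
        self.shows[item] += 1
        self.value[item] += (reward - self.value[item]) / self.shows[item]
```

Each interaction runs the loop once: `choose` an item, observe the user, pass the outcome through `critic`, and feed the score to `learning_element`. If the feedback is biased, the estimates will be too.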

Advanced Agentic AI Types for Enterprise Environments

The five classical types represent a spectrum of capability. Modern enterprise systems, however, often require architectures that go beyond a single agent type.

Multi-Agent Systems

A multi-agent system (MAS) is an environment where multiple independent agents operate simultaneously, either cooperatively or competitively, to solve problems that are too large or too complex for a single agent.

Think of it as the difference between one highly skilled analyst and a full team of specialists — each handling their domain, sharing information, and arriving at a collective result faster than any individual could.

- How it works: Each agent in the system perceives its own local environment and acts according to its own logic — reflex, goal-based, learning, or any combination. A coordination layer (which may itself be an agent) manages communication between agents, resolves conflicts when their actions overlap, and aggregates outputs into a coherent result. Agents can operate in parallel, dramatically reducing the time needed to complete complex, multi-domain tasks. The coordination layer is where the real engineering complexity lives. It handles three distinct responsibilities:

  - Data exchange mechanisms: Agents communicate through shared message queues, structured API calls, or a shared memory store (sometimes called a "blackboard"). The choice matters: message queues are asynchronous and resilient but introduce latency; shared memory is fast but requires careful locking to prevent race conditions when multiple agents read and write simultaneously.

  - Conflict resolution: When two agents produce contradictory outputs or attempt to act on the same resource, the coordination layer applies a resolution strategy. Common approaches include priority ranking (one agent's output takes precedence), voting (the majority position wins when multiple agents assess the same input), and negotiation protocols (agents exchange bids or proposals until consensus is reached).

  - Result aggregation: Once individual agents complete their subtasks, the coordination layer synthesizes outputs into a single coherent response or action. This may involve merging data, resolving partial overlaps, or applying a final validation step before the aggregated result is passed to the next stage or returned to the user.

- Example: In supply chain management, separate agents handle demand forecasting, procurement, logistics routing, and supplier communication — each optimized for its domain, all coordinated toward delivery performance. When a shipping delay is detected, the logistics agent flags it, the procurement agent adjusts reorder timing, and the communication agent notifies relevant suppliers — simultaneously, without a human orchestrating each handoff.

- Limitation: Coordination is the hidden cost of multi-agent systems. The more agents operating in parallel, the higher the risk of conflicting actions, communication bottlenecks, and cascading failures when one agent misbehaves. Designing reliable inter-agent communication protocols and conflict resolution mechanisms is non-trivial — and debugging a MAS when something goes wrong is significantly more complex than debugging a single-agent system.
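Two of the conflict resolution strategies mentioned above, priority ranking and voting, are simple enough to sketch directly. The function names and the shape of `proposals` (a mapping from agent name to proposed action) are assumptions for illustration; negotiation protocols are more involved and are not shown.

```python
from collections import Counter


def resolve_by_priority(proposals, priority):
    """Return the proposal of the highest-ranked agent that submitted one.

    proposals: {agent_name: action}; priority: agent names, highest first.
    """
    for agent in priority:
        if agent in proposals:
            return proposals[agent]
    raise ValueError("no proposal from any ranked agent")


def resolve_by_vote(proposals):
    """Return the action backed by a strict majority, or None if there is none."""
    if not proposals:
        return None
    action, count = Counter(proposals.values()).most_common(1)[0]
    return action if count > len(proposals) / 2 else None
```

Returning `None` on a tie is a deliberate choice in this sketch: it forces the coordination layer to fall back to another strategy or escalate, instead of silently picking a side.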

Multi-agent systems are where scalable architecture for AI becomes critical. Poorly designed inter-agent communication creates bottlenecks and conflict states that are hard to debug. For organizations looking to build these systems, working with an experienced software outsourcing services partner can compress the learning curve significantly.

Autonomous Task Agents

Autonomous task agents are designed to complete long-horizon, multi-step tasks with minimal human checkpoints. They break a high-level objective into subtasks, execute each one, handle errors, and deliver a final output. These are the systems that most people imagine when they hear "AI agent" in 2025–2026.

Platforms like AutoGPT, CrewAI, and enterprise variants built on LangGraph are making this category increasingly accessible. But implementation quality varies enormously, and the risk profile demands rigorous testing before production deployment.

- How it works: The agent receives a high-level goal and decomposes it into an ordered sequence of subtasks. It then executes each subtask using available tools — APIs, code interpreters, web browsers, file systems — monitoring outcomes at each step. When a subtask fails or produces an unexpected result, the agent re-plans rather than stopping. The loop continues until the final deliverable is produced or a defined exit condition is met.

- Example: An engineering team deploying an autonomous agent to audit a legacy codebase for security vulnerabilities. The agent identifies files, runs static analysis tools, cross-references against known Common Vulnerabilities and Exposures (CVEs), prioritizes findings by severity, and generates a structured report—all autonomously.

- Limitation: Autonomous task agents are powerful precisely because they operate with minimal checkpoints — which also means errors can compound across steps before a human notices. They require robust observability tooling and well-scoped task boundaries. Deploying them against open-ended, ambiguous objectives without those guardrails is the fastest way to erode trust in the system.
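The decompose, execute, and re-plan loop can be sketched as a plain function. This is a skeleton under stated assumptions, not the control loop of any particular framework: `decompose`, `execute`, and `replan` are hypothetical callables supplied by the caller (in practice they would wrap model calls and tools), and `max_steps` stands in for the defined exit condition.

```python
def run_task(goal, decompose, execute, replan, max_steps=20):
    """Run subtasks until the plan is empty or the step budget is spent.

    decompose(goal) -> list of subtasks
    execute(subtask) -> (ok, result)
    replan(subtask, result) -> list of replacement subtasks
    """
    pending = list(decompose(goal))
    results = []
    steps = 0
    while pending:
        if steps >= max_steps:
            # Exit condition: stop and report instead of looping forever.
            return {"status": "step_limit", "results": results}
        subtask = pending.pop(0)
        steps += 1
        ok, result = execute(subtask)
        if ok:
            results.append((subtask, result))
        else:
            # Re-plan instead of stopping: replacement subtasks go to the front.
            pending = list(replan(subtask, result)) + pending
    return {"status": "done", "results": results}
```

Note that a failing subtask whose replacement also fails will consume the whole step budget without producing any result, which is one way errors compound when no human is watching.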

For engineering teams and Software Outsourcing Services, autonomous task agents represent the most direct path to meaningful throughput gains on repeatable, well-defined workflows.

Hybrid Agents

Most production AI systems are, in practice, hybrid agents — they combine reactive rules (for speed and safety), internal models (for context), goal-directed planning (for complex sequences), and learning loops (for improvement over time).

- How it works: A hybrid agent is assembled by layering multiple agent architectures into a unified decision pipeline. Typically, a fast reflex layer handles high-frequency, low-stakes decisions instantly. A model-based or goal-based layer processes more complex conditions that require context or planning. A learning layer sits above both, continuously refining the thresholds and parameters that govern the other layers based on observed outcomes. The result is a system that is simultaneously fast, contextual, and self-improving.

- Example: A fraud detection system in a payments platform. When a transaction arrives, a reflex layer checks it against known fraud signatures and blocks obvious matches in milliseconds. A model-based layer then scores the transaction against recent account behavior. If the score is borderline, a goal-based layer initiates a step-up verification flow. All three layers feed outcome data to a learning component that recalibrates scoring thresholds weekly as fraud patterns evolve.

- Limitation: Flexibility comes at a cost. Hybrid agents are significantly harder to design, test, and maintain than any single-type agent. When a decision goes wrong, attributing the failure to the correct layer — reflex, model-based, or learning — requires deep observability tooling and engineering expertise. Without that investment, hybrid systems can become opaque black boxes that are difficult to audit, explain to stakeholders, or safely update. Complexity should be earned, not assumed.
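The layering in the fraud example can be sketched as a single class with one method per layer. Everything here is a simplified assumption: the `KNOWN_BAD_CARDS` set, the scoring formula, the thresholds, and the recalibration rule are invented for illustration, and the goal-based verification flow is reduced to a returned label.

```python
KNOWN_BAD_CARDS = {"4111-0000-0000-0000"}  # hypothetical fraud signatures


class HybridFraudAgent:
    def __init__(self, block_threshold=0.8, review_threshold=0.5):
        self.block_threshold = block_threshold
        self.review_threshold = review_threshold
        self.typical_amount = {}  # model layer state: account -> mean amount
        self.counts = {}

    def record(self, account, amount):
        """Update the running mean amount for an account (model layer state)."""
        n = self.counts.get(account, 0) + 1
        usual = self.typical_amount.get(account, 0.0)
        self.counts[account] = n
        self.typical_amount[account] = usual + (amount - usual) / n

    def _score(self, account, amount):
        """Model-based layer: score deviation from the account's usual amount."""
        usual = self.typical_amount.get(account)
        if not usual:
            return 0.0  # no history yet, so nothing to compare against
        return min(1.0, max(0.0, (amount / usual - 1.0) / 10.0))

    def decide(self, account, card, amount):
        if card in KNOWN_BAD_CARDS:  # reflex layer: instant block
            return "block"
        score = self._score(account, amount)
        if score >= self.block_threshold:
            return "block"
        if score >= self.review_threshold:
            return "step_up_verification"  # hand-off to a goal-based flow
        return "approve"

    def recalibrate(self, false_positive_rate, target=0.05, step=0.05):
        """Learning layer: nudge the review threshold from observed outcomes."""
        if false_positive_rate > target:
            self.review_threshold = min(self.block_threshold, self.review_threshold + step)
        else:
            self.review_threshold = max(0.0, self.review_threshold - step)
```

Even in this toy version, a wrong decision could originate in the signature list, the scoring formula, or a recalibrated threshold, which hints at why attributing failures in hybrid systems needs per-layer logging.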

Pure architectures are useful for classification and learning — but real enterprise problems rarely fit neatly into a single box. Hybrid agents are what scalable architecture looks like in practice. They give organizations the control of rule-based systems and the adaptability of learning systems in a single deployable product.

Key Differences Between Agent Types

| Agent Type | Memory | Adaptability | Decision Logic |
| --- | --- | --- | --- |
| Simple Reflex | None | None | Condition → Action rules |
| Model-Based | Short-term state | Low | Rules + environment model |
| Goal-Based | Goal + state | Medium | Planning toward an objective |
| Utility-Based | Goal + state + preferences | Medium-High | Maximize expected utility |
| Learning | Persistent, evolving | High | Feedback-driven model updates |
| Multi-Agent | Distributed across agents | Very High | Cooperative or competitive coordination |
| Autonomous Task | Task memory + tool outputs | High | Hierarchical subtask decomposition |

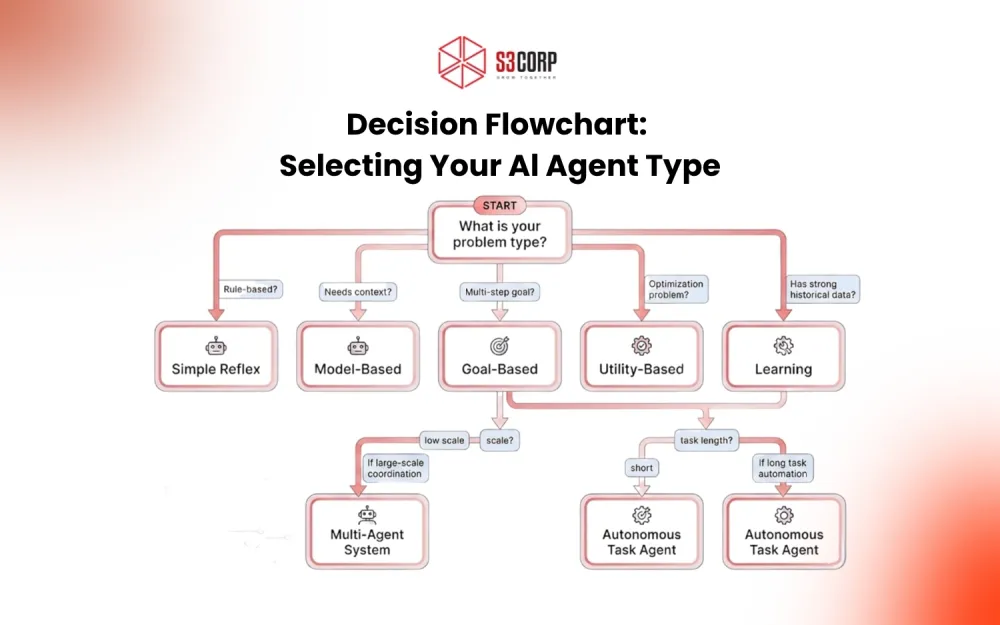

How to Choose the Right Agent Type for Your Business

The type of agent that creates value for your organization depends on three practical factors — not on which technology sounds most impressive.

Match the Agent to the Problem Type

| Problem Type | Best Agent Match | Real-World Agent Examples |
| --- | --- | --- |
| Fixed rules, low variability | Simple Reflex | Zendesk rule-based routing, IVR phone trees, basic spam filters |
| Context-dependent decisions | Model-Based | Datadog anomaly detection, AWS CloudWatch alarms, network intrusion monitors |
| Multi-step workflows with a clear end state | Goal-Based | Zapier multi-step automations, ServiceNow workflow engines, robotic process automation (RPA) bots |
| Trade-off optimization across multiple variables | Utility-Based | Uber surge pricing, Airbnb Smart Pricing, programmatic ad bidding systems, inventory reorder optimizers |
| Improving accuracy from user or outcome data | Learning | Spotify Discover Weekly, Netflix recommendations, Salesforce Einstein lead scoring |
| Distributed, large-scale coordination | Multi-Agent | AutoGen multi-agent pipelines, CrewAI orchestration frameworks, enterprise supply chain platforms |

Cost and Latency: The Two Factors Most Teams Overlook

Agent architecture determines not only capability but also operating cost and response speed. These two dimensions often serve as tie-breakers when multiple agent types could technically solve the same problem.

| Agent Type | Typical Latency | Relative Cost per Decision | Notes |
| --- | --- | --- | --- |
| Simple Reflex | < 10 ms | Very Low | Runs on deterministic rules; no model inference required |
| Model-Based | 50–200 ms | Low–Medium | State maintenance adds overhead; scales with model complexity |
| Goal-Based | 200 ms–2 s | Medium | Planning algorithms are computationally heavier; depends on action space size |
| Utility-Based | 100 ms–1 s | Medium–High | Scoring many options in parallel; cost rises with variable count |
| Learning | Variable | High (training) / Medium (inference) | Training cost is upfront and significant; inference at scale is manageable |
| Multi-Agent | 500 ms–5 s+ | High | Coordination overhead multiplies with agent count; parallel execution helps but adds infra cost |
| Autonomous Task | Seconds–minutes | Highest | Multiple LLM calls, tool invocations, and replanning loops per task |

The practical implication: if your use case demands sub-100 ms responses (real-time fraud screening, live content moderation, instant chatbot replies), reflex agents and lightweight model-based agents are the architectures most likely to deliver at that speed. Learning and autonomous task agents are better suited to asynchronous workflows where a longer processing window is acceptable in exchange for higher decision quality.

Assess Your Data Readiness

Learning agents require clean, labeled, historical data to train on — and continuous feedback loops to improve. If your data is sparse, inconsistent, or siloed, starting with a learning agent will produce disappointing results and erode stakeholder confidence in AI adoption.

Goal-based and utility-based agents need well-defined problem spaces and clear success metrics. Reflex and model-based agents are more forgiving of limited data environments, making them reasonable starting points for organizations early in their AI journey.

Factor in Risk Tolerance

Autonomous task agents and multi-agent systems operate with significant independence. In regulated industries such as healthcare and financial services, this independence requires well-designed override mechanisms and audit trails. The more autonomous a system, the more critical it is to implement observability, rollback mechanisms, and human-in-the-loop checkpoints for high-stakes decisions.

For organizations in Fintech or Healthcare verticals, agent governance is not optional. It is a compliance requirement.

Beyond general caution, there are specific technical and security risks that every team deploying agentic AI needs to account for explicitly:

- Agentic Loop Protection: Autonomous and multi-agent systems can enter runaway execution loops — repeatedly invoking tools, calling APIs, or spawning sub-agents — if exit conditions are poorly defined or a task enters an unexpected state. Without hard limits on iteration count, execution time, and resource consumption, a single misconfigured agent can exhaust compute budgets or lock critical systems in minutes. Every agentic deployment needs a circuit breaker: a maximum step count, a timeout threshold, and an automatic escalation path when either is hit.

- Prompt Injection Attacks: When an agent interacts with external data sources — web pages, documents, user-submitted content, third-party APIs — that content can contain embedded instructions designed to hijack the agent's behavior. A malicious website could instruct a browsing agent to exfiltrate data. A crafted document could redirect a document-processing agent to ignore its original task. Prompt injection is the agentic equivalent of SQL injection, and it is currently one of the least-defended attack surfaces in enterprise AI deployments. Mitigations include input sanitization, strict tool permission scoping, and sandboxed execution environments.

- Privilege Escalation and Tool Scope Creep: Agents granted broad tool access — file systems, APIs, email clients, databases — can take actions far outside their intended scope if instructions are ambiguous or the agent reasons its way into "helpful" side effects. Apply the principle of least privilege: every agent should have access to only the tools and data sources strictly necessary for its task, scoped at the most granular level the platform supports.

- Auditability and Explainability: In regulated industries — finance, healthcare, legal — every decision an autonomous system makes may need to be explained, logged, and defended. Before deploying any agent with real-world consequences, confirm that your architecture produces a traceable decision log. Multi-step autonomous agents that rely on LLM reasoning are particularly difficult to audit after the fact unless logging is built into the execution pipeline from the start.
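Three of the controls above (a circuit breaker, least-privilege tool scoping, and a decision log) can be combined in one thin wrapper around tool calls. This is a sketch under assumptions: `GuardedToolRunner`, its limits, and the log format are hypothetical, and it does not address prompt injection, which needs input handling and sandboxing beyond what a wrapper like this provides.

```python
import time


class GuardedToolRunner:
    """Wraps tool calls with a step budget, a timeout, an allow-list, and a log."""

    def __init__(self, tools, allowed, max_steps=10, max_seconds=30.0):
        self.tools = tools            # name -> callable (hypothetical tools)
        self.allowed = set(allowed)   # least privilege: explicit allow-list
        self.max_steps = max_steps
        self.max_seconds = max_seconds
        self.steps = 0
        self.started = time.monotonic()
        self.audit_log = []           # one entry per attempted call

    def call(self, name, *args):
        elapsed = time.monotonic() - self.started
        if self.steps >= self.max_steps or elapsed > self.max_seconds:
            # Circuit breaker: refuse further work and hand off to a human.
            self.audit_log.append((name, args, "escalated"))
            raise RuntimeError("circuit breaker tripped: escalate to a human")
        if name not in self.allowed:
            self.audit_log.append((name, args, "denied"))
            raise PermissionError(f"tool not permitted: {name}")
        self.steps += 1
        result = self.tools[name](*args)
        self.audit_log.append((name, args, "ok"))
        return result
```

Denied and escalated attempts are logged as well as successful ones, so the log records what the agent tried to do and not only what it was allowed to do.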

A practical framework: deploy reflex or model-based agents in production first. Measure performance, build trust, then graduate to more autonomous architectures as your team's confidence and your infrastructure's maturity both grow. Good software testing services coverage at each stage — including adversarial testing for prompt injection and loop conditions — is non-negotiable.

Real Business Use Cases by Agent Type

Understanding classifications is only useful when you can map them to outcomes. Here is how these agent types perform across common enterprise functions.

Customer Support (Simple Reflex → Hybrid): A global e-commerce brand starts with reflex agents for FAQ deflection—reducing first-contact load by routing inquiries automatically. As the data matures, they layer a learning agent to detect sentiment shifts and escalation signals, creating a hybrid that handles 70–80% of contacts without human intervention.

Sales and Revenue (Learning Agents): Predictive lead scoring, churn prediction, and next-best-action recommendations all depend on learning agents ingesting CRM history, engagement signals, and closed-won data. Organizations that deploy this architecture consistently report pipeline quality improvements within two to three quarters.

Supply Chain & Logistics (Utility Agents + Multi-Agent Systems): Optimizing delivery routes, balancing inventory across warehouses, and coordinating supplier lead times are inherently multi-variable problems. Utility-based agents handle trade-off optimization; multi-agent systems coordinate across organizational boundaries.

Software Engineering (Autonomous Task Agents): Code generation, test writing, pull request summarization, and documentation are all being accelerated by autonomous task agents. Teams that integrate these into existing CI/CD pipelines through DevOps Services are seeing measurable reductions in cycle time — particularly for routine, well-defined engineering tasks.

Engineering & DevOps (Autonomous Task Agents): Development teams use autonomous agents integrated with their DevOps Services pipeline to run regression tests, flag failures, and generate incident summaries, which can cut hours of manual investigation per incident. Organizations looking to implement this can explore Web Application Development Services and Full-Lifecycle App Development frameworks that integrate agentic tooling from the start.

Healthcare Operations (Model-Based & Goal-Based Agents): In HealthCare Solutions, model-based agents support clinical decision tools that track patient history across encounters. Goal-based agents power care coordination workflows where the objective is measurable — discharge readiness, medication adherence, follow-up completion.

Common Mistakes in Agentic AI Deployment

Getting the classification right is only half the challenge. These are the mistakes that consistently undermine AI initiatives — even when the technology choice is correct.

- Choosing the wrong agent for the problem. Deploying a learning agent for a problem that has clear rules is expensive and slow. Conversely, using a simple reflex agent for a dynamic environment means it will fail under any condition it was not explicitly programmed for.

- Skipping the data foundation. Learning agents are only as good as their training data. Launching without clean, representative, and sufficient data produces a system that learns the wrong patterns and applies them confidently.

- Over-engineering too early. Multi-agent systems are powerful — and expensive to build, test, and maintain. Many organizations start at the top of the complexity ladder when a well-tuned goal-based agent would deliver 80% of the value at 20% of the effort.

- Removing human oversight prematurely. Autonomous agents need guardrails, particularly in domains where errors carry financial, legal, or reputational consequences. Observability and human review mechanisms are not bureaucratic friction — they are what make autonomous systems trustworthy.

How S3Corp Approaches Agentic AI Development

With over 19 years of experience delivering software solutions for clients across North America, the UK, Singapore, and beyond, the team at S3Corp has watched the AI agent landscape shift from academic theory to enterprise standard.

The pattern is consistent: businesses that define the agent architecture before writing a line of code achieve faster time-to-value and need less costly re-architecture work downstream. That discipline (strategic approach first, implementation second) is the foundation of every AI engagement at S3Corp.

S3Corp works with product leaders and CTOs to design solutions that start with the right agent classification, build on a solid data foundation, and optimize cost and performance at every layer of the stack. Whether you are exploring a targeted automation for a single workflow or orchestrating a multi-agent system for enterprise operations, the work begins with a clear problem definition, not a technology choice.

Our teams span Mobile App Development, Web Application Development Services, Software Testing Services, and full-cycle delivery, which means we can support the full agent implementation journey—from architecture design through production deployment and ongoing iteration.

If you are mapping your AI strategy and want a second opinion on which agent architecture fits your use case, contact the S3Corp team for a working session with our technical leads.

Conclusion: The Type Determines the Outcome

No single agent type is universally superior. A learning agent deployed in an environment with no reliable data will underperform a well-designed simple reflex system. A reflex agent applied to a dynamic, multi-variable problem will fail the moment reality moves outside its rule set.

The discipline is in the matching: problem type to agent type, data readiness to learning requirements, risk tolerance to autonomy level. That matching exercise—done rigorously before a line of code is written—is what separates AI implementations that scale from ones that stall.

FAQ on Types of Agentic AI

What are the 5 types of intelligent agents?

The five standard types of intelligent agents in AI are: Simple Reflex Agents, Model-Based Agents, Goal-Based Agents, Utility-Based Agents, and Learning Agents. Each represents a different level of decision-making sophistication, from basic condition-action rules to feedback-driven self-improvement.

What is the difference between goal-based and utility-based agents?

A goal-based agent asks: "Will this action achieve my goal?" It selects any action that reaches the objective. A utility-based agent asks: "Which action produces the best outcome?" It quantifies and compares all goal-reaching options using a utility function, optimizing for maximum value rather than simple success.

Are agentic AI and intelligent agents the same thing?

They overlap significantly, but "intelligent agents" is the broader academic classification. "Agentic AI" is the more recent industry term, typically applied to modern systems capable of long-horizon autonomous task execution — often combining multiple agent types in a single architecture. All agentic AI systems are intelligent agents, but not all intelligent agents qualify as fully agentic.

Which AI agent type is best for business?

There is no universal answer. The best agent type depends on your problem structure, data maturity, and risk tolerance. Simple reflex agents work well for narrow, rule-governed tasks. Learning agents add value where historical data is rich and continuous improvement matters. Utility-based agents excel at optimization problems. For complex, distributed challenges, multi-agent systems often deliver the highest impact — at the cost of greater architectural complexity.

How many types of AI agents exist?

The five foundational types (Reflex, Model-Based, Goal-Based, Utility-Based, Learning) are standard across AI literature. In applied enterprise contexts, these expand to include Multi-Agent Systems, Autonomous Task Agents, and Hybrid Agents — which combine multiple foundational types into a single deployable system.
